
What Is a Neural Network?

Artificial Neural Network

A neural network is a computing system inspired by the human brain, consisting of layers of interconnected nodes (neurons) that process data. Each connection has a weight that's adjusted during training. Neural networks learn to recognize patterns, classify data, and make predictions.

How a Neural Network Works

A neural network has three kinds of layers: an input layer (receives the data), one or more hidden layers (process and transform it), and an output layer (produces the result). Each neuron multiplies its inputs by weights, sums them, adds a bias, and passes the result through an activation function. Training adjusts all weights and biases to minimize prediction error.
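The per-neuron computation can be sketched in plain Python. This is a forward pass only, assuming a hypothetical 2-3-1 network with hand-picked (not trained) weights, ReLU in the hidden layer, and a sigmoid on the output; the names `dense`, `W1`, `b1`, `W2`, and `b2` are illustrative.

```python
import math

def relu(z):
    return max(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases, activation):
    """One fully connected layer: neuron j outputs activation(sum_i w_ji * x_i + b_j)."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias  # weighted sum plus bias
        outputs.append(activation(z))
    return outputs

# Hypothetical hand-picked parameters: 2 inputs -> 3 hidden neurons -> 1 output
W1 = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]]
b1 = [0.0, 0.1, -0.1]
W2 = [[1.0, -1.0, 0.5]]
b2 = [0.2]

x = [1.0, 2.0]                  # input layer: the raw features
h = dense(x, W1, b1, relu)      # hidden layer
y = dense(h, W2, b2, sigmoid)   # output layer: a value between 0 and 1
print(h, y)
```

Stacking more `dense` calls gives more hidden layers; training (next paragraph) is what replaces the hand-picked numbers with learned ones.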

Simple neural networks with one or two hidden layers handle tasks like spam detection and price prediction. Deep networks with dozens of layers or more handle complex tasks like image recognition (CNNs), language understanding (Transformers), and game playing (deep reinforcement learning).

Training a neural network means feeding it examples, comparing its predictions with the correct answers, measuring the error with a loss function, and adjusting the weights using backpropagation (to compute gradients) and gradient descent (to apply the updates). This loop repeats, often thousands or millions of times, until the loss stops improving.
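The training loop can be sketched end to end without a framework. This is a minimal sketch under stated assumptions: a hypothetical toy dataset (logical AND), a 2-3-1 network with tanh hidden units and a sigmoid output, binary cross-entropy loss, and full-batch gradient descent with a hand-picked learning rate. Backpropagation here is just the chain rule written out by hand; frameworks compute the same gradients automatically.

```python
import math
import random

random.seed(0)

# Hypothetical toy dataset: the logical AND of two inputs
X = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
Y = [0.0, 0.0, 0.0, 1.0]

H = 3          # hidden neurons (assumption)
LR = 0.5       # learning rate (assumption)
EPOCHS = 2000

# W1[j][i] connects input i to hidden neuron j; W2[j] connects hidden j to the output
W1 = [[random.uniform(-1, 1) for _ in range(2)] for _ in range(H)]
b1 = [0.0] * H
W2 = [random.uniform(-1, 1) for _ in range(H)]
b2 = 0.0

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x):
    h = [math.tanh(sum(w * xi for w, xi in zip(W1[j], x)) + b1[j]) for j in range(H)]
    p = sigmoid(sum(w * hj for w, hj in zip(W2, h)) + b2)
    return h, p

losses = []
n = len(X)
for _ in range(EPOCHS):
    # Accumulate gradients over the whole batch
    gW1 = [[0.0, 0.0] for _ in range(H)]
    gb1 = [0.0] * H
    gW2 = [0.0] * H
    gb2 = 0.0
    loss = 0.0
    for x, y in zip(X, Y):
        h, p = forward(x)
        # Binary cross-entropy; the tiny epsilon avoids log(0)
        loss += -(y * math.log(p + 1e-12) + (1 - y) * math.log(1 - p + 1e-12))
        # Backpropagation: for sigmoid + cross-entropy, dLoss/dz_out = p - y
        d_out = p - y
        gb2 += d_out
        for j in range(H):
            gW2[j] += d_out * h[j]
            # Chain rule through tanh: d tanh(z)/dz = 1 - tanh(z)^2
            d_hidden = d_out * W2[j] * (1 - h[j] ** 2)
            gb1[j] += d_hidden
            for i in range(2):
                gW1[j][i] += d_hidden * x[i]
    losses.append(loss / n)
    # Gradient descent: step every parameter against its averaged gradient
    b2 -= LR * gb2 / n
    for j in range(H):
        W2[j] -= LR * gW2[j] / n
        b1[j] -= LR * gb1[j] / n
        for i in range(2):
            W1[j][i] -= LR * gW1[j][i] / n
```

The average loss recorded in `losses` should fall over the epochs; the exact values depend on the random initialization and learning rate.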

Why Developers Use Neural Networks

Neural networks power nearly every modern AI application: image recognition (photos, medical imaging), NLP (translation, chatbots), recommendation engines (Netflix, YouTube), autonomous vehicles, and game AI. Developers use frameworks like PyTorch and TensorFlow rather than building networks from scratch.

Key Concepts

- Neuron — A node that receives inputs, applies weights and bias, and outputs a value through an activation function

- Layers — Input → hidden → output. Each layer transforms data, extracting progressively more abstract features

- Weights and Biases — Learnable parameters adjusted during training — weights determine connection strength, biases shift activation

- Activation Function — Non-linear function (ReLU, sigmoid) that enables the network to learn complex, non-linear patterns

- Backpropagation — The algorithm that calculates how each weight contributed to prediction error, enabling gradient-based updates

- Loss Function — Measures how far off predictions are from correct answers — MSE for regression, cross-entropy for classification
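Several of these concepts are small formulas. The helpers below are plain-Python sketches of two common activation functions, softmax (which turns raw output scores into class probabilities), and the two loss functions named above; the function names are illustrative, and real frameworks provide vectorized, numerically hardened versions.

```python
import math

def relu(z):
    """ReLU: passes positive values through, zeroes out negatives."""
    return max(0.0, z)

def sigmoid(z):
    """Sigmoid: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def softmax(logits):
    """Converts raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def mse(predictions, targets):
    """Mean squared error, typical for regression."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

def cross_entropy(probs, true_class):
    """Categorical cross-entropy for one example: -log(probability of the true class)."""
    return -math.log(probs[true_class])
```

For example, `mse([2.0, 4.0], [1.0, 4.0])` gives 0.5, and `cross_entropy([0.5, 0.25, 0.25], 0)` gives log 2 ≈ 0.693; the cross-entropy shrinks toward 0 as the probability assigned to the true class approaches 1.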

Neural Network with TensorFlow/Keras

import tensorflow as tf

# Build a simple neural network

model = tf.keras.Sequential([

tf.keras.layers.Dense(128, activation='relu', input_shape=(784,)),

tf.keras.layers.Dropout(0.2),

tf.keras.layers.Dense(64, activation='relu'),

tf.keras.layers.Dense(10, activation='softmax')

])

model.compile(

optimizer='adam',

loss='sparse_categorical_crossentropy',

metrics=['accuracy']

)

# Train

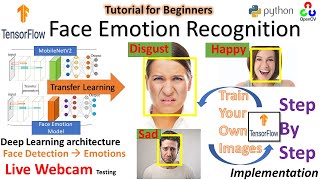

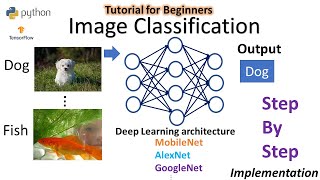

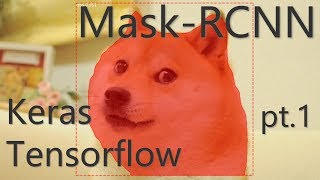

model.fit(X_train, y_train, epochs=10, validation_split=0.2)Learn Neural Network — Top Videos
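To see how many weights and biases the Keras model above actually learns, the count can be worked out by hand: a Dense layer with n inputs and u units has n × u weights plus u biases, and Dropout adds none. The helper name `dense_params` is illustrative; `model.summary()` should report the same total.

```python
def dense_params(n_inputs, n_units):
    """A Dense layer learns one weight per input-unit pair plus one bias per unit."""
    return n_inputs * n_units + n_units

# (inputs, units) for each Dense layer in the Sequential model; Dropout has no parameters
layers = [(784, 128), (128, 64), (64, 10)]
counts = [dense_params(n_in, units) for n_in, units in layers]
total = sum(counts)
print(counts, total)  # [100480, 8256, 650] 109386
```

Almost all of the parameters sit in the first layer, because every one of the 784 inputs connects to every one of its 128 neurons.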


Frequently Asked Questions

How many layers should a neural network have?

Start simple. For tabular data, 2-3 hidden layers often suffice. For images, use pre-trained CNNs like ResNet. For text, use pre-trained Transformers. Adding more layers isn't always better — it can cause overfitting.

Can neural networks learn anything?

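In theory, a network with even one hidden layer and enough neurons can approximate any continuous function on a bounded input range to arbitrary accuracy (the universal approximation theorem). The theorem guarantees that suitable weights exist, not that training will find them. In practice, neural networks are limited by data quantity, data quality, compute resources, and architecture choices.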
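The theorem's idea can be illustrated constructively for one input. The sketch below builds (rather than trains) a one-hidden-layer ReLU network whose output linearly interpolates a target function at evenly spaced points; hidden neuron k computes relu(x - knot_k), and its output weight is the change in slope at that knot. Approximating `math.sin` on [0, π] and the name `build_relu_approximator` are assumptions chosen for illustration, not part of the theorem.

```python
import math

def relu(z):
    return max(0.0, z)

def build_relu_approximator(f, a, b, n_hidden):
    """Construct a one-hidden-layer ReLU network that linearly interpolates f
    at n_hidden + 1 evenly spaced points on [a, b]."""
    knots = [a + (b - a) * k / n_hidden for k in range(n_hidden + 1)]
    values = [f(x) for x in knots]
    slopes = [(values[k + 1] - values[k]) / (knots[k + 1] - knots[k]) for k in range(n_hidden)]
    # Output weight of hidden neuron k = how much the slope changes at knots[k]
    out_weights = [slopes[0]] + [slopes[k] - slopes[k - 1] for k in range(1, n_hidden)]
    out_bias = values[0]

    def network(x):
        # Hidden neuron k: weight 1, bias -knots[k], ReLU activation
        return out_bias + sum(w * relu(x - knots[k]) for k, w in enumerate(out_weights))
    return network

errors = {}
grid = [math.pi * i / 1000 for i in range(1001)]
for n in (4, 16, 64):
    net = build_relu_approximator(math.sin, 0.0, math.pi, n)
    errors[n] = max(abs(net(x) - math.sin(x)) for x in grid)
    print(n, errors[n])
```

The maximum error shrinks as hidden neurons are added (for linear interpolation of sin it is bounded by h²/8 for knot spacing h), which matches the theorem's claim that more neurons allow a closer fit. The weights here are computed directly; nothing guarantees gradient descent would discover them.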

What's the difference between a neural network and deep learning?

A neural network is a model architecture. Deep learning is the practice of training deep (many-layer) neural networks. There is no strict cutoff: a network with one or two hidden layers is usually called shallow, while a 50-layer network clearly counts as deep learning.
